Abstract

Organisations are drowning in information but starving for usable insight. Non-existent information management (IM) strategies, digital landfills, scattered physical storage, inconsistent practices and fragmented platforms are just some of the problems, and organisation leaders can’t see the true cost or value of the information their teams create every day. That matters, because unchecked information problems create legal and operational risk and block strategic decisions. Measuring IM maturity through an audit isn’t an academic exercise in documenting problems; it’s the first step on the road to organisation-wide change for the better.

This article was written in the lead-up to ‘Beyond Boundaries’, ALGIM’s Annual Conference 2025, held from 25th to 27th November 2025 at the Tākina Conference Centre, Wellington. On the Tuesday I will be hosting a workshop, ‘Starting the IM Journey – Helping Council Staff Discover the Value in Information Management’, and on the Wednesday I am presenting ‘From Audit to Action – IM Maturity Assessments & Tools That Work’. The latter presentation guides the content of this article, continuing the discussion and helping you get the most out of an IM maturity assessment.

A Quick History

You can’t master what you don’t measure; that’s why IM Maturity assessments matter

The ability to measure capabilities and shortfalls across a discipline, and then close gaps and create solutions, is a relatively modern development. The roots of the modern information management maturity assessment (IMMA) hark back to the work of our IT colleagues. Carnegie Mellon University’s Software Engineering Institute released its Capability Maturity Model in 1987 as a five-level framework for improving software development processes. It later evolved into the more detailed Software CMM, and work on an integrated model, CMMI, began in the late 1990s.[1] Its influence endures: the five-level framework is still widely adopted, and it sparked a wide range of other maturity models and standards, branching out into other governance disciplines in the 2000s.

One such framework was the ARMA International Information Governance Maturity Model (IGMM). Tied to ARMA’s Generally Accepted Recordkeeping Principles, the IGMM was designed to bring together the stakeholders across an organisation who have an interest in best practice for managing information effectively. It was also an attempt to bridge the language barrier we commonly encounter as IM professionals working with other specialists. While the ARMA Principles are a “good start”, they are very high-level and are not meant to replace the minimum legislative requirements for information management in a given country.

A Quick Note on Definitions, Too…

Before we go on, it’s important to understand and keep in mind these definitions:

Information Management Audit: A formal, evidence-based review required by legislation that checks an organisation’s IM practices for compliance with statutory obligations and internal policy; it follows an independent audit methodology and specific audit questions.

Information Management Maturity Assessment (IMMA): A diagnostic framework that measures the capability and performance of IM across defined categories and topics, producing scores and findings to guide improvements.

How they fit together: Auditors use IMMA findings and evidence to inform their enquiries, but the IMMA is only one tool in the assessment toolbox. The IM audit remains a separate, legislated compliance process with its own questions and judgement.

Formative Years In NZ

So, what legislative requirements do New Zealand public offices and local authorities need to meet? And how did those requirements lead to the audit process used by Archives New Zealand, and to the IMMA framework?

The Public Records Act 2005 (PRA) established a new regulatory regime for records management across New Zealand public offices. The Act empowers the Chief Archivist and Archives New Zealand | Te Rua Mahara to issue standards, monitor compliance, conduct audits, and require public offices to maintain and dispose of public records.[2] Sections 27 to 29 are the key parts of the Act to understand, as they enable both the audit process and the Archives New Zealand IMMA. Below are the relevant sections of the PRA, paraphrased in my own words:

Section 27:

- Every standard must say who it applies to and whether following it is mandatory or optional.

- The Chief Archivist can create standards about public and local authority records: how they’re created and kept, how they’re appraised for disposal, and public access to them. These standards can change over time.

Section 28:

- Covers what information standards must include, such as:

  - Particular records (e.g. List of Protected Records)

  - Best practice to follow (e.g. Best practice Guidance on Digital Storage & Preservation)

  - Minimum acceptable standards (e.g. Information and Records Management Standard, Minimum Metadata Requirements for Creation & Disposal, etc.)

Section 29:

- The Chief Archivist has the right to inspect the records & archives of public offices and local authorities

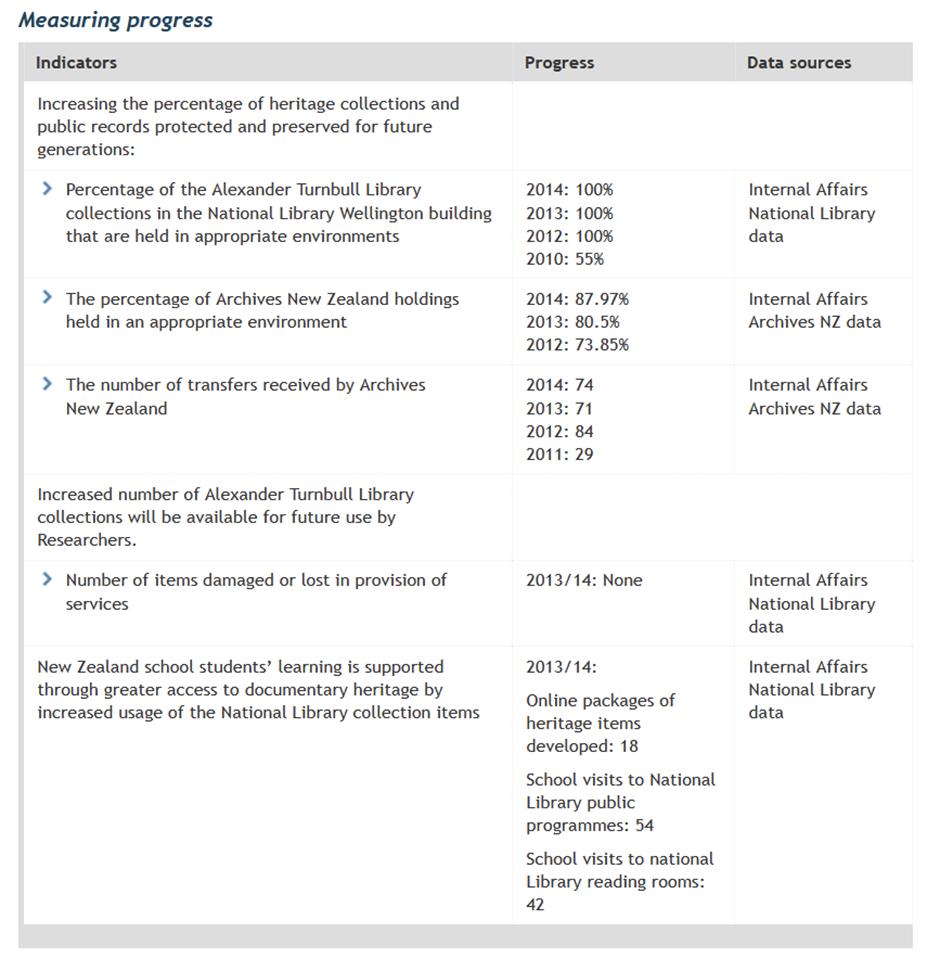

Archives New Zealand ran the first round of audits across the public sector from 2011 to 2014, with 169 public offices audited by one of the “Big Four” accounting firms during this first cycle. As the accompanying screenshot shows, there was a flurry of activity associated with this first round of Archives New Zealand audits, especially in the section ‘The number of transfers received by Archives New Zealand’.[3]

Several public offices noted in their annual reports from these formative years that they had been audited by Archives New Zealand during the financial year, but none provided scores or mentioned a specific framework used in the audit process. In 2014/15, after the first round of audits was completed, Chief Archivist Greg Goulding expressed concern about the low level of recordkeeping maturity in more than half of the public offices audited under the PRA.[4] This led to a programme of work to optimise Archives New Zealand’s regulatory function. It included issuing the mandatory Information and Records Management (IRM) Standard along with a formal Regulatory statement, revising core guidance material and embedding the Executive Sponsor role in public offices and local authorities. You could say that the audit process itself was maturing.

While this provided a solid bedrock from which to build, there was still plenty of work for Archives New Zealand to complete. As Acting Chief Archivist Richard Foy stated in the Chief Archivist’s Report on the State of Government Recordkeeping 2016/17:

We identified the need for a refreshed approach to monitoring to ensure we have a more joined-up, comprehensive picture of sector capability and performance. A new framework should ensure we target our efforts; achieve better coverage of the sector; put our tools to best use; and more effectively measure the progress of government information management over time… Work to establish this monitoring framework is underway and expected to be completed during the 2017/18 year. It will include a plan for how the next audit cycle of public offices will be carried out.[5]

A wero (challenge) had been laid down by the Chief Archivist for Archives New Zealand and the IM community.

A New Era

The taki was taken up by Heather McKay and Miranda Welch of Archives New Zealand, with assistance from Kerri Siatiras of Siatiras Consulting Limited. The new IMMA framework was built on the IM3 tool from Public Record Office Victoria (PROV). PROV developed IM3 in 2013 and launched its first IM3-based assessment programme, IMMAP, in January 2016, which provided relevant feedback and lessons for the new IMMA.[6]

As part of the trend towards openness and transparency within government, the 2017/18 State of Government Recordkeeping report stated, “We will make audit and survey results available, so that the New Zealand public can more readily understand the state of government information management.”[7] It was the standardisation of the new IM Maturity Assessment across categories, topics and individual high-level requirements that allowed the audit process to produce quantifiable outcomes and benchmarks over time. The new audit programme aimed to assess 40 to 50 public offices per annum using the IMMA, with a target of approximately 200 audited by the end of the five-year programme. Audit results were to be tabled in Parliament annually before publication, along with the Chief Archivist’s commentary, trends in IM and individual case studies accompanying each public office’s IMMA scores. All data was also to be made publicly searchable on the Archives New Zealand website and via the New Zealand Government Data site.

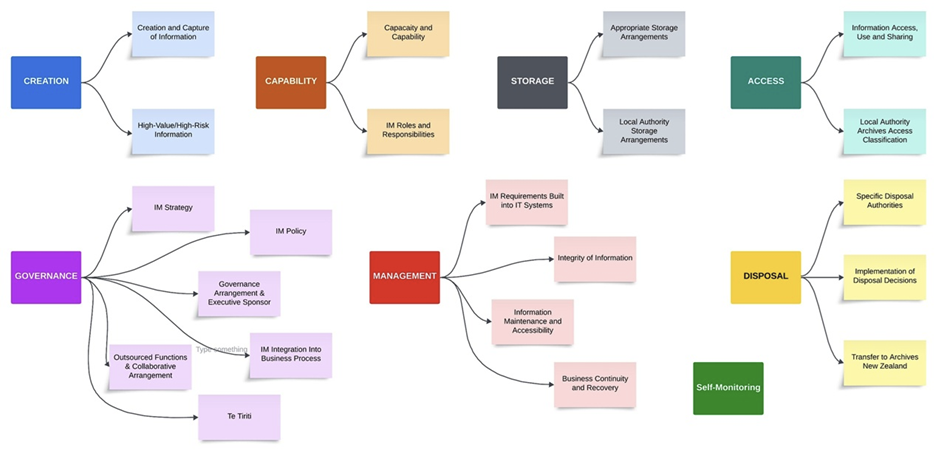

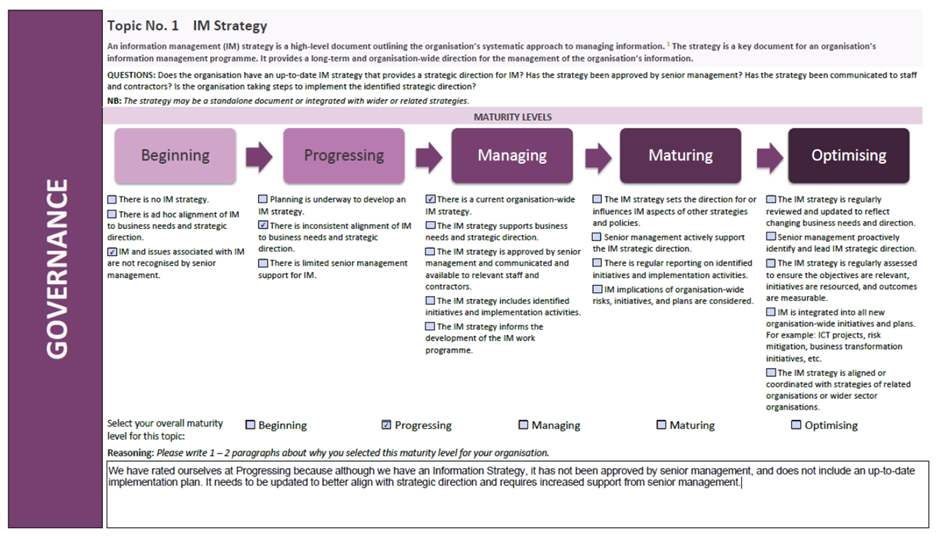

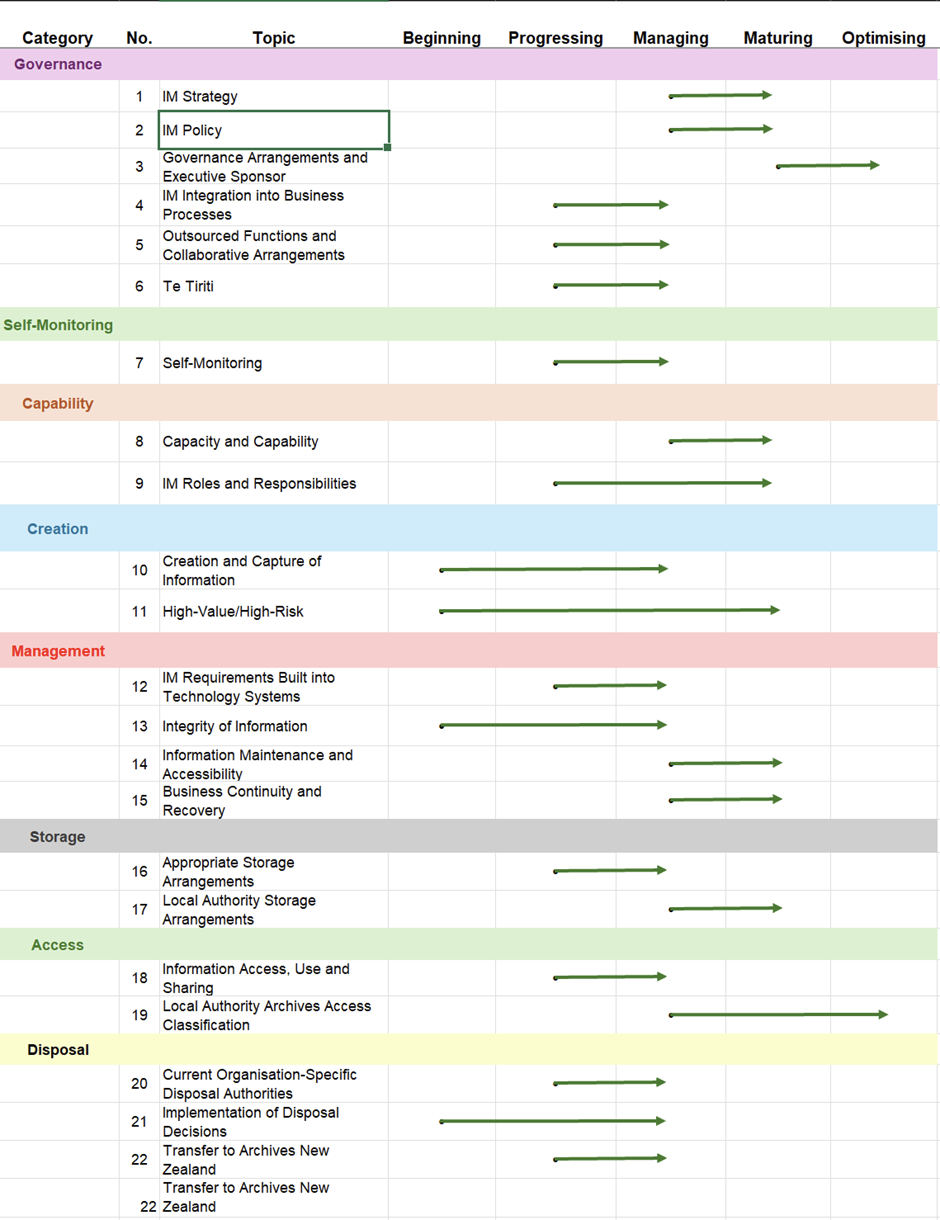

The 2020/21 State of Government Recordkeeping report describes the development and rollout of the annual audit process, the use of the new IMMA and the publication of PRA audit reports.[8] The IMMA looks at eight categories and assesses up to 22 topics related to IM practice. As each public office was notified well before its audit took place, IM staff within the office were expected to complete a self-assessment using the IMMA template before the auditors arrived to conduct the formal assessment. The IMMA also sought to increase uptake by local authorities and provide more flexibility by including two topics specific to local authorities (Local Authority Storage Arrangement and Local Authority Archive Access Classification). The accompanying infographic shows the individual categories (darker colours) and the topics within them (lighter colours).

Each topic begins with a definition, followed by questions organisations need to ask themselves before commenting and scoring. At the bottom of each topic is a “Reasoning” open-text box for explaining why the organisation chose that score, recording evidence and making other notes.

During an IM audit, auditors use various social-science methodologies to gather evidence. This includes document reviews, interviews with key staff and the Executive Sponsor, focus groups, and inspections of physical storage and digital platforms. The IMMA, which holistically assesses capability across the organisation, helps support this work by providing a consistent baseline of scores, revealing gaps and making observations that can point auditors toward areas requiring closer attention. The IMMA does not replace the audit itself; auditors still follow their own legislated methodology and ask additional questions as required.

Once the fieldwork is complete, a draft audit report is provided to the public office for review and comment. The organisation has a formal right of reply before the findings are finalised. The Chief Archivist then issues the final letter and the report is published. Afterward, any follow-up actions, often developed with Archives New Zealand, are tracked through to completion. The full process usually takes about 12 months, though it can take longer if more substantial follow-up is needed.

Results

The results from the 2021 to 2025 round of public office audits show IM maturity slowly increasing across public offices.

When the first round of audit results came in during 2021, only 5 out of 28 (18%) public offices were at Managing (the minimum requirement) or higher for the majority of topics scored.[9] In the latest round of results, 7 out of 23 (30%) audited public offices were at Managing or higher across the majority of topics.[10] The Report: State of Government Recordkeeping and Public Records Act 2005 Audits 2014/15 says,

It is disappointing to see that, although the Act came into force 10 years ago, barely half of the public offices audited in 2014/15 have recordkeeping maturity at or above the level of a managed approach to records management.

This was followed by the line in the 2020/21 report stating, “With just one year of the refreshed audit programme completed we are seeing similar results”.[11]
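To make the “Managing or higher for the majority of topics” measure concrete, here is a minimal Python sketch of how it could be calculated from topic scores. It is an illustration only, not Archives New Zealand’s method, and it rests on stated assumptions: the five maturity levels are taken to be Beginning, Progressing, Managing, Maturing and Optimising (scored 1 to 5), “majority” is read as strictly more than half of the topics actually scored, and the office and topic names are hypothetical.

```python
from __future__ import annotations

from enum import IntEnum


class Level(IntEnum):
    """Assumed IMMA maturity levels, ordered so they can be compared."""
    BEGINNING = 1
    PROGRESSING = 2
    MANAGING = 3  # the minimum expected level
    MATURING = 4
    OPTIMISING = 5


def managing_or_higher_for_majority(scores: dict[str, Level | None]) -> bool:
    """Return True if more than half of the scored topics are at Managing or above.

    Topics left unscored (None), such as local-authority-only topics
    for a public office, are excluded from the count.
    """
    scored = [level for level in scores.values() if level is not None]
    if not scored:
        return False
    at_or_above = sum(1 for level in scored if level >= Level.MANAGING)
    # "Majority" is read here as strictly more than half.
    return at_or_above * 2 > len(scored)


def share_meeting_threshold(offices: dict[str, dict[str, Level | None]]) -> float:
    """Percentage of offices that pass the majority test, to one decimal place."""
    if not offices:
        return 0.0
    passing = sum(
        1 for scores in offices.values() if managing_or_higher_for_majority(scores)
    )
    return round(100 * passing / len(offices), 1)


# Hypothetical example data; office and topic names are illustrative only.
offices = {
    "Office A": {
        "Governance": Level.MANAGING,
        "Capability": Level.MATURING,
        "Self-monitoring": Level.PROGRESSING,
    },
    "Office B": {
        "Governance": Level.BEGINNING,
        "Capability": Level.PROGRESSING,
        "Local authority archives": None,
    },
}
print(share_meeting_threshold(offices))  # 50.0
```

Office A has two of its three scored topics at Managing or above, so it passes; Office B has none, so half of the example offices meet the measure.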

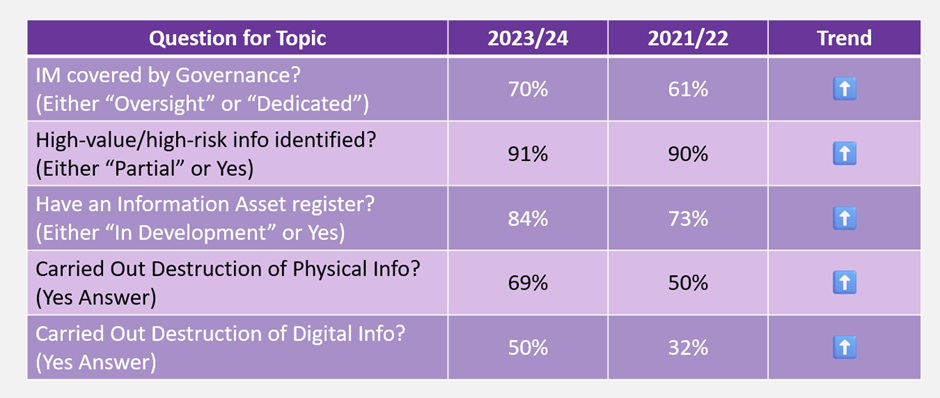

While the individual IMMA results and the annual Report on the State of Government Recordkeeping paint a sombre picture of IM maturity, there is light at the end of the tunnel in the parallel Survey of Public Sector Information Management. Like the Report on the State of Government Recordkeeping, the survey monitors key indicators and provides a high-level view of whether information management in New Zealand is improving, declining or remaining stable. Its results from 2021/22 to 2023/24 show progress across multiple topics.

2025 Update

After seeking user feedback, Archives New Zealand released minor updates and improvements to the Information Management Maturity Assessment in September 2025. These changes include:

- better alignment with the requirements of the mandatory IRM Standard

- editing or removing duplicate or conflicting descriptor statements

- adding statements that clarify the Executive Sponsor’s role and responsibilities

- adding statements about appraisal and sentencing processes

In addition, Archives New Zealand has confirmed that it will continue to embed the Information Management Maturity Assessment across public offices and local authorities, set a clear expectation that a “Managing” level of IM maturity is the minimum standard, and focus on developing further enhancements to the IMMA identified through its use and user feedback. The Government has also added a new KPI for Archives New Zealand’s audits: at least 20% of agencies receiving new assessments should meet or exceed the Managing level on the Information Management Maturity Assessment.[12]
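Tracking a KPI like this is simple arithmetic once you know how many new assessments reached Managing or above. The Python sketch below is a hypothetical helper, assuming the KPI is measured as a share of new assessments in a reporting period; the function names are invented for illustration and are not part of any Archives New Zealand tool.

```python
import math
from fractions import Fraction

# Assumed reading of the KPI: at least 20% of new assessments at Managing or above.
KPI_TARGET = Fraction(20, 100)


def kpi_met(at_managing_or_above: int, new_assessments: int,
            target: Fraction = KPI_TARGET) -> bool:
    """Check whether the share of new assessments at Managing or above meets the target."""
    if new_assessments <= 0:
        raise ValueError("new_assessments must be positive")
    if not 0 <= at_managing_or_above <= new_assessments:
        raise ValueError("at_managing_or_above must be between 0 and new_assessments")
    return Fraction(at_managing_or_above, new_assessments) >= target


def required_to_meet(new_assessments: int, target: Fraction = KPI_TARGET) -> int:
    """Smallest number of assessments at Managing or above that satisfies the target."""
    return math.ceil(new_assessments * target)


print(kpi_met(4, 20))        # True: exactly 20%
print(kpi_met(3, 20))        # False: 15%
print(required_to_meet(23))  # 5, since 20% of 23 is 4.6
```

Using exact fractions rather than floating-point numbers means a result sitting exactly on 20% is never misjudged because of rounding.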

Finally, sections 34 and 35 of the Public Records Act require an Independent Audit of the Recordkeeping Practices of the Chief Archivist to be commissioned at least every 10 years. This audit took place in 2024, and a summary report, recommendations and an action plan were developed in 2025. At the time of writing, the results are expected to be tabled in the House of Representatives by the end of the year.

Adding Value to the Audit Process

So, with the IMMA’s history and development in mind, what can we do beyond using the Archives New Zealand IMMA? How can we add value to the audit process and adapt it to our organisation’s purposes? In this section I’ll detail ways you can build on this strong bedrock, along with tips and tricks for undertaking your own self-assessment audit.

Survey & Interview Questions

As noted above, part of the audit process is talking with staff across all parts of the organisation to build a comprehensive understanding of IM practices and processes, so that each topic can be scored accurately. How do we go about this, though? I adopt a social-science mixed-methods research methodology when undertaking IM maturity assessments for clients, and the staff surveys and interviews form its qualitative component. This includes interviews with the Executive Sponsor and general managers to assess the functions that lead to information creation, use and reuse, and management. I also craft an all-staff survey from a template of more than 300 questions, each tied to a specific IM topic, to obtain feedback on identified issues.

Part of the nuance of conducting surveys and interviews is asking targeted questions of staff at all levels after you have secured initial documentation and findings. For example, there is no point asking a hydrologist at a regional council what they think of the council’s IM policy and how it applies to their work if you later find out that no IM policy actually exists! The questions you put to staff should be based on individual IM topics that need further commentary or evidence to justify a score. They also need to be targeted to your audience and strike the right tone. Here are some example interview questions for an Executive Sponsor:

- How do you champion information and records management at the leadership level, ensuring it is seen as an integral part of business operating effectively? (Strategic Leadership & Vision section)

- What governance structures or committees will you establish to provide clear oversight and accountability for information management? (Governance, Oversight, and Resources section)

- What approach do you take to identify, assess, and mitigate risks to information assets, especially high-value or high-risk records, as recommended by the Archives NZ standard? (Legal Compliance and Risk Management section)

Now, compare this to questions in an all-staff survey:

- Are there specific areas of IM where your team would benefit from more expert guidance (e.g. retention/disposal, privacy handling, metadata tagging, system use)? (Capability section)

- How confident are you that high-value/high-risk information in your area is protected from loss, damage, or unauthorised access? (High-Value/High-Risk Information section)

- Has your business unit identified any records or data classified as protected information or local authority archives requiring long-term preservation? (Local Authority Storage Arrangements for Protected Information and Local Authority Archives section)

Surveys and interviews are also very useful for backing up your own findings. Staff answers can also prompt you to challenge those findings and give you a reason to re-check or cross-check information. You will be amazed at the range of thoughts, opinions and great ideas that come from staff when you ask them engaging questions!
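The principle of only asking questions tied to topics that still need evidence, and only once the document review has confirmed what exists, can be sketched as a simple filter over a question bank. This Python example is a hypothetical illustration: the Question fields, audience labels and topic names are assumptions made for the sketch, not a published template or the IMMA’s own structure.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    """One entry in a hypothetical survey and interview question bank."""
    text: str
    topic: str                   # the IMMA topic this question gathers evidence for
    audiences: frozenset[str]    # illustrative labels, e.g. "executive", "all-staff"
    requires: str | None = None  # a document the question presumes exists


def select_questions(
    bank: list[Question],
    audience: str,
    topics_needing_evidence: set[str],
    documents_found: set[str],
) -> list[Question]:
    """Keep questions for this audience on topics still needing evidence,
    skipping any that presume a document the review did not find."""
    selected = []
    for question in bank:
        if audience not in question.audiences:
            continue
        if question.topic not in topics_needing_evidence:
            continue
        if question.requires is not None and question.requires not in documents_found:
            continue  # e.g. don't ask about an IM policy that doesn't exist
        selected.append(question)
    return selected


bank = [
    Question("How does the council's IM policy affect your day-to-day work?",
             "Policy", frozenset({"all-staff"}), requires="IM policy"),
    Question("How confident are you that high-value/high-risk information in "
             "your area is protected from loss, damage, or unauthorised access?",
             "High-value/high-risk information", frozenset({"all-staff"})),
    Question("How do you champion information and records management at the "
             "leadership level?", "Strategic leadership", frozenset({"executive"})),
]

chosen = select_questions(
    bank, "all-staff",
    topics_needing_evidence={"Policy", "High-value/high-risk information"},
    documents_found=set(),  # in this example, the review found no IM policy
)
for question in chosen:
    print(f"[{question.topic}] {question.text}")
```

Here the IM policy question is dropped because no policy was found, and the leadership question is dropped because it is meant for the Executive Sponsor rather than all staff.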

Evidence for Scoring/Commentary

As an example of a more detailed research method, I have built a template that expands on the simple open-text box in the Archives New Zealand IMMA PDF. It records the documents that can be pointed to for each topic as evidence of good (or bad) practice. Any gaps in documentation can also be recorded, along with owners and ideas for organisation, department or team documentation that would improve processes and lead to higher scores in future assessments.

Comprehensive Scoring & Recommendations

This template is where we pull together all of the information we have collated, storing it in a “single source of truth”. Each category and topic is mapped out with the following columns:

- Current IMMA score

- Comments on the specific topic

- Action points to consider

- Projected outcomes from action points

- Forecasted future scoring on the IMMA after action points are completed

- Owners of the action points and follow-up projects

- Estimations of difficulty and resourcing for projects

Another useful column I have added is “Archives NZ supporting document to achieve”, which lists documents from Archives New Zealand’s A-Z List of Guidance that directly support a topic. I have also added a “Recommendations by Category” tab with relevant focus points and the top three recommendations, should executives wish to see the project presented that way.
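A register like this maps naturally onto a simple data structure. The Python sketch below is one possible shape for it, assuming scores on a 1 to 5 scale; the field names are hypothetical, and ranking each category’s recommendations by forecast uplift is an assumed rule for choosing the top three, not a prescribed one.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class TopicRow:
    """One row of a hypothetical scoring and recommendations register."""
    category: str
    topic: str
    current_score: int       # current IMMA level, assumed to run from 1 to 5
    forecast_score: int      # forecast level once the action points are complete
    action_points: list[str]
    owners: list[str]
    difficulty: str          # e.g. "low", "medium", "high"
    comments: str = ""
    projected_outcomes: str = ""
    supporting_guidance: list[str] = field(default_factory=list)  # Archives NZ guidance

    @property
    def uplift(self) -> int:
        """Forecast improvement in maturity levels."""
        return self.forecast_score - self.current_score


def top_recommendations(rows: list[TopicRow], per_category: int = 3) -> dict[str, list[str]]:
    """Group rows by category and keep the actionable topics with the largest uplift."""
    by_category: dict[str, list[TopicRow]] = defaultdict(list)
    for row in rows:
        by_category[row.category].append(row)
    summary: dict[str, list[str]] = {}
    for category, category_rows in by_category.items():
        with_actions = [r for r in category_rows if r.action_points]
        ranked = sorted(with_actions, key=lambda r: r.uplift, reverse=True)
        summary[category] = [f"{r.topic}: {r.action_points[0]}" for r in ranked[:per_category]]
    return summary


rows = [
    TopicRow("Governance", "Executive Sponsor", 2, 3,
             ["Brief the Executive Sponsor on their responsibilities"], ["IM Manager"], "low"),
    TopicRow("Governance", "IM policy", 1, 3,
             ["Draft and approve an IM policy"], ["IM Manager"], "medium"),
    TopicRow("Storage", "Physical storage", 2, 2, [], ["Facilities Manager"], "high"),
]
for category, recommendations in top_recommendations(rows).items():
    print(category, recommendations)
```

Keeping every column on one record makes it straightforward to generate both the topic-by-topic view and the category summary from the same “single source of truth”.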

Building a Project Dashboard

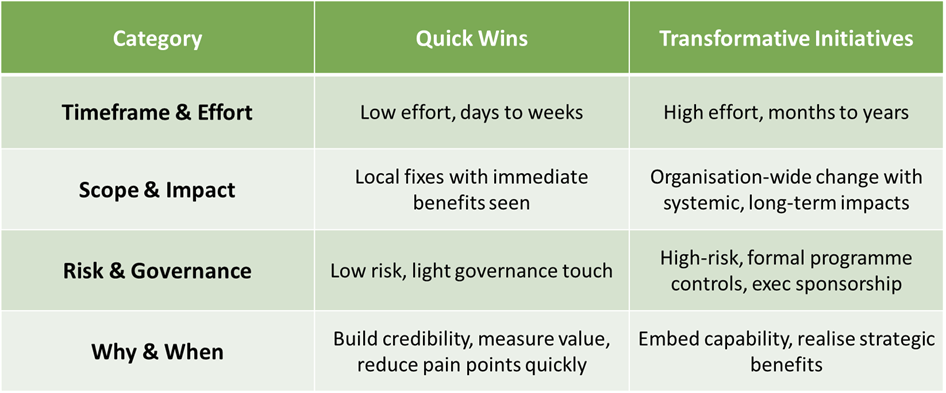

A well-structured project dashboard allows your stakeholders to track immediate progress alongside long-term change. It is also a way to tell your story in a succinct and targeted way. Within the dashboard, differentiating between quick wins and transformative initiatives is key. Why does this differentiation matter?

Quick wins focus on relatively fast, low-cost fixes that remove barriers and prove the value of information management to staff. These interventions can be delivered in days or weeks, target specific teams or processes, and require only a light touch from an oversight perspective. They are designed to reduce friction and build credibility for IM, creating measurable improvements to the organisation’s day-to-day functions and increasing buy-in from other departments and teams.

By contrast, transformative initiatives are strategic, organisation-wide efforts that reshape how information is created, stored, used and re-used. These initiatives place greater demands on organisational resources and capability, requiring sustained investment over months or years. They bring about systemic change across people, process and technology, while also carrying higher risk. They may also require one or more quick wins as prerequisites before they can begin. As such, they require formal programme controls and visible executive sponsorship.

While quick wins deliver rapid relief and momentum, transformative programmes embed capability and unlock long-term strategic benefit. Make sure to know the difference – every large project begins with one small step!
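The distinction between quick wins and transformative initiatives, and the point that transformative work may depend on quick wins, can be sketched as a classification rule plus a prerequisite ordering. In this hypothetical Python example the week and cost thresholds are placeholders to tune to your organisation, the initiative names are invented, and the ordering uses the standard library’s graphlib.TopologicalSorter (Python 3.9 or later).

```python
from __future__ import annotations

from dataclasses import dataclass, field
from graphlib import TopologicalSorter

# Hypothetical thresholds: tune these to your organisation's context.
QUICK_WIN_MAX_WEEKS = 6
QUICK_WIN_MAX_COST = 10_000  # NZD, illustrative


@dataclass
class Initiative:
    name: str
    duration_weeks: float
    estimated_cost: float
    organisation_wide: bool
    prerequisites: list[str] = field(default_factory=list)  # names of other initiatives


def classify(item: Initiative) -> str:
    """Label an initiative a quick win only if it is short, cheap and locally scoped."""
    if (
        item.duration_weeks <= QUICK_WIN_MAX_WEEKS
        and item.estimated_cost <= QUICK_WIN_MAX_COST
        and not item.organisation_wide
    ):
        return "quick win"
    return "transformative"


def delivery_order(items: list[Initiative]) -> list[str]:
    """Order initiatives so every prerequisite comes before the work that needs it."""
    graph = {item.name: item.prerequisites for item in items}
    return list(TopologicalSorter(graph).static_order())


items = [
    Initiative("Migrate shared drives to the EDRMS", 52, 250_000, True,
               ["Agree a file-naming convention", "Remove duplicate files"]),
    Initiative("Agree a file-naming convention", 2, 0, False),
    Initiative("Remove duplicate files", 4, 2_000, False),
]
by_name = {item.name: item for item in items}
for name in delivery_order(items):
    print(f"{name}: {classify(by_name[name])}")
```

TopologicalSorter raises a CycleError if two initiatives depend on each other, which is a useful early warning that a programme plan needs untangling. Treating any organisation-wide initiative as transformative, however short, follows the definitions above.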

Scoring Chart

Finally, a picture is worth a thousand words, especially when you can make it look great on a digital platform. A comprehensive scoring chart shows all categories and topics in the IMMA, along with forecast scores in 12 to 36 months once all action points are completed. It is also a useful way to show the future direction of the organisation’s IM maturity.
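Before a chart is styled on a dashboard platform, the underlying data is simply a current and a forecast score per topic. As a rough sketch, this Python function renders that data as a text bar chart; the 1 to 5 scale and level names are assumptions, the topic names are illustrative, and in practice you would feed the same data into your dashboard tool of choice.

```python
from __future__ import annotations

# Assumed IMMA scale, 1 to 5.
LEVELS = ["Beginning", "Progressing", "Managing", "Maturing", "Optimising"]


def render_chart(scores: dict[str, tuple[int, int]], width: int = 4) -> str:
    """Render a text bar chart: '#' marks the current level, '+' the forecast uplift."""
    label_width = max(len(topic) for topic in scores)
    lines = []
    for topic, (current, forecast) in scores.items():
        if not (1 <= current <= 5 and 1 <= forecast <= 5):
            raise ValueError(f"scores for {topic!r} must be between 1 and 5")
        uplift = max(forecast - current, 0)  # a forecast decline is not drawn
        bar = "#" * (current * width) + "+" * (uplift * width)
        lines.append(
            f"{topic.ljust(label_width)} | {bar.ljust(5 * width)} | "
            f"{LEVELS[current - 1]} -> {LEVELS[forecast - 1]}"
        )
    return "\n".join(lines)


print(render_chart({
    "Governance": (2, 3),
    "Capability": (1, 3),
    "Storage": (3, 3),
}))
```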

The Final Report

The grand finale of the entire audit process! The final report matters far more than simply wrapping things up. It is your opportunity to turn weeks of interviews, surveys, scoring and analysis into a compelling narrative that decision-makers can actually use. Executives and budget holders don’t want raw data; they want a clear story that explains where the organisation currently stands in the IM environment, why it matters, and what it will take to move IM topics to the Managing level or beyond. A well-structured report will include a one-page executive summary, findings and recommendations by topic, and future projections. This is your chance to give your stakeholders a digestible roadmap rather than a pile of disconnected insights.

The final report also serves as the organisation’s first and best reference point after the auditing dust settles. With sections covering staff feedback, leadership perspectives, category-by-category findings and top recommendations, it documents the current state in a way that anyone can revisit. Including projected future outcomes and a clear methodology helps executives understand that maturity improvement isn’t just about tidying up folders and dusting off London boxes; it’s about placing a high value on all of the IM work going on within the organisation, regardless of format.

A well-written report makes the dry world of metadata, governance and storage conditions feel accessible. It gently nudges those holding the purse strings to see IM not as a cost centre but as an efficiency engine and a strategic enabler of staff. The final report isn’t just the end of an audit; it’s the beginning of securing funding for IM projects that can make a positive difference.

Gazing Into the Future

What future path might the audit sector tread? When speculating, it is easier to predict what will become reality in the short term; the further out you attempt to predict on a timeline, the more variables come into play and the harder it is to get right.

I can foresee the blending of IM audits with other audit types becoming a routine exercise for public offices. New Zealand is already starting to formalise maturity models in related areas. For example, the new Cyber Security Capability Maturity Model (CS‑CMM) sets a minimum maturity (Level 2: Planned and Tracked) for all agencies, and encourages progress to Level 3 (Standardised).[13] Likewise, Statistics New Zealand’s Government Chief Data Steward rolled out a Data Maturity Assessment in 2023 to score agencies’ data practices.[14] The Privacy Maturity Assessment Framework (PMAF), which explicitly aligns with information management and security domains, was also refreshed in 2020–21 to provide privacy leadership to the public service.[15]

In the near future, we may see these strands converge into a single digital‑governance maturity framework. For instance, an integrated scorecard could pull in IM metrics alongside cybersecurity and data governance ratings, giving a holistic view of an agency’s digital health. Such a framework would make it easier to identify overlapping risks (e.g. data leaks or lost records) and streamline assurance work. In practice, agencies might use shared audit tools or dashboards that combine questions from archives, IT security, and data governance standards, so that one audit yields insight on all fronts. That’s all before we start adding in AI and automation, but that’s a discussion for another day…

Acknowledgements

If you made it this far, thank you for reading! Also, thank you to the following individuals for their feedback, commentary and editing of this document:

- Richard Foy – President, ARANZ, and Kaiwhakahaere, Pūnaha Mōhio, Te Puni Kōkiri

- Kerri Siataris, owner of Siataris Consulting

Feel free to leave a comment below, or contact me to see how I can help you with conducting a comprehensive audit using the Archives New Zealand IMMA.

Endnotes

[1] Carnegie Mellon University. 2006. “A History of the Capability Maturity Model for Software.” KiltHub. https://kilthub.cmu.edu/articles/journal_contribution/A_History_of_the_Capability_Maturity_Model_for_Software/6620666 (accessed December 11, 2025).

[2] Archives New Zealand. n.d. “Information Management Maturity Assessment.” Archives New Zealand – Manage Information / How We Regulate / Monitoring and Audit. https://www.archives.govt.nz/manage-information/how-we-regulate/monitoring-and-audit/information-management-maturity-assessment (accessed December 11, 2025).

[3] Department of Internal Affairs. 2014. “Part A.” DIA Annual Report 2014. https://www.dia.govt.nz/annualreport_2014/part-a.html (accessed December 11, 2025).

[4] Archives New Zealand. 2019. Chief Archivist’s Annual Report on the State of Government Recordkeeping 2019–20. https://www.archives.govt.nz/files/etfoy87fj9he/1klU9QVQ4O5ejaqaLTbXXM/b6a3719fe1e4d222a8cee19433f849dd/Chief_Archivists_Annual_Report_on_the_State_of_Government_Recordkeeping_201920.pdf (accessed December 11, 2025).

[5] Archives New Zealand. 2017. Report: State of Government Recordkeeping 2016–17. https://www.archives.govt.nz/files/etfoy87fj9he/19PXlxScs4osYkRITd7gpf/0392b116973b1aa2be3d1b13138786a2/report_-_state_of_government_recordkeeping_2016-17.pdf (accessed December 11, 2025).

[6] Public Record Office Victoria. n.d. “Information Management Maturity Assessment Program (IMMAP).” Public Record Office Victoria. https://prov.vic.gov.au/recordkeeping-government/research-projects/information-management-maturity-assessment-program-immap (accessed December 11, 2025).

[7] Archives New Zealand. n.d. Report on the State of Government Recordkeeping 2017–18. https://www.archives.govt.nz/about-us/publications/report-on-the-state-of-government-recordkeeping/report-recordkeeping-2017-18 (accessed December 11, 2025).

[8] Archives New Zealand. 2021. Chief Archivist’s Report 2020–21. https://www.archives.govt.nz/files/etfoy87fj9he/5AfW4SHPxkaAqyWyvS0UCK/b0b0d66c7d841c766c1ae6d4d4e11732/Chief_Archivists_Report_2020-21.pdf (accessed December 11, 2025).

[9] Ibid.

[10] Archives New Zealand. 2024. Report on the State of Government Recordkeeping 2023–24. https://www.archives.govt.nz/files/etfoy87fj9he/6ITJlgQ2OwQhcKE3zQ6RLr/5001a342f6861d506122aaabf5605bd7/report-on-state-of-government-recordkeeping-2023-24.pdf (accessed December 11, 2025).

[11] Archives New Zealand. 2021. Chief Archivist’s Report 2020–21.

[12] New Zealand Treasury. 2025. “Vote Internal Affairs.” New Zealand Budget 2025. https://budget.govt.nz/budget/2025/by/vote/intaff.htm (accessed December 11, 2025).

[13] National Cyber Security Centre NZ. n.d. “Capability Maturity Model.” NCSC NZ. https://www.ncsc.govt.nz/protect-your-organisation/capability-maturity-model (accessed December 11, 2025).

[14] data.govt.nz. n.d. “Data Maturity Assessment.” data.govt.nz. https://data.govt.nz/leadership/data-maturity-assessment (accessed December 11, 2025).

[15] Department of Internal Affairs / Digital.govt.nz. n.d. “What Is the Privacy Maturity Assessment Framework (PMAF)?” Digital.govt.nz. https://www.digital.govt.nz/standards-and-guidance/privacy-security-and-risk/privacy/privacy-maturity-assessment-framework-pmaf-and-self-assessments/learn-about-pmaf-2/what-pmaf-is (accessed December 11, 2025).

One response to “Measuring What Matters: IM Maturity & Auditing”


[…] and want to make their lives, and the lives of those around them, easier. Maybe they’ve dealt with an audit finding, a messy OIA/LGOIMA request, or a project that went sideways because no one could find the right […]

This article is licensed under a Creative Commons 4.0 Attribution Non-Commercial license. Please attribute: Evan Greensides, EG Consulting, December 2025.
